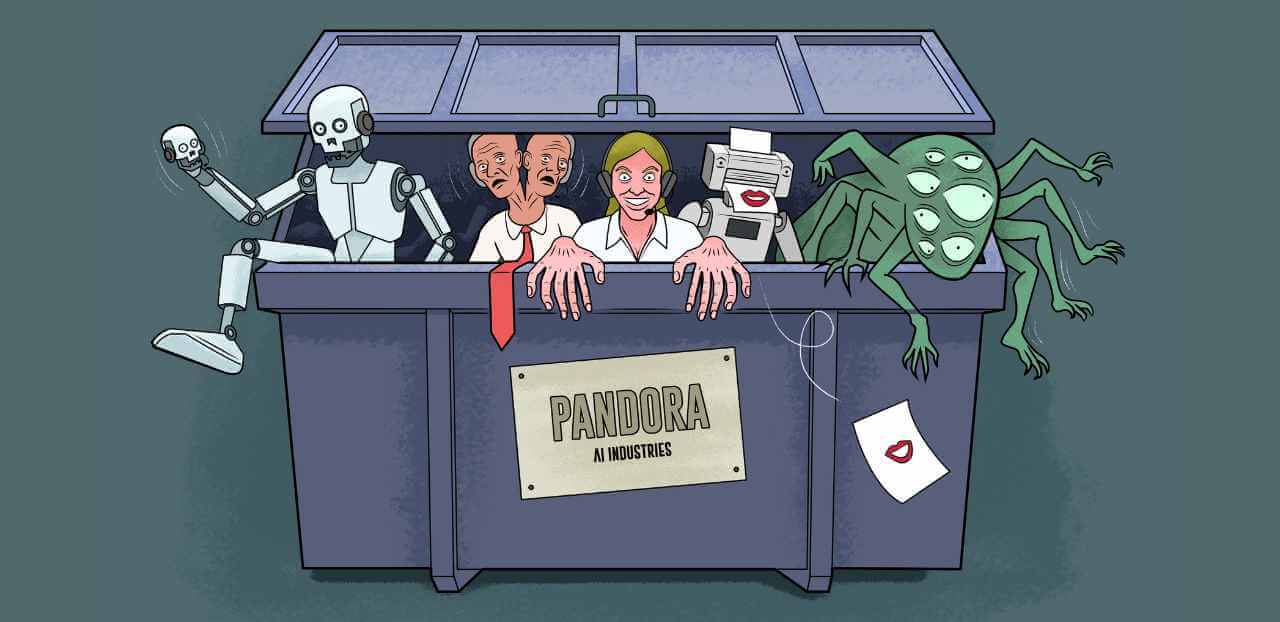

Why AI projects fail

Authors: Lotte Hart & Nena Roes | Image: Ricky Booms | 07-04-2026

Research shows time and again that the grand promises of generative AI projects have not yet been fulfilled. Figures and success criteria vary by study - it makes quite a difference whether you look at return on investment, employee adoption, budget overruns, or efficiency gains - but the general picture that emerges is one of disappointment regarding the discrepancy between what we expect from GenAI and what it actually delivers. According to all these studies, the cause of this failure is not the technology itself, but a combination of organizational and human factors.

What is the problem, in fact?

The main cause of failure, as we see it in the companies where we work, is miscommunication about exactly which problem AI is supposed to solve. When an organization states that it wants to do ‘something with AI,’ it is rarely a choice driven by business strategy, but more often a reaction to the fear of falling behind. Many companies, for example, hope to achieve efficiency gains through an AI initiative, as that is the image AI has acquired in broad circles. This triggers a feeling of ‘we need to do something with this,’ without first considering important underlying questions: does this focus on efficiency align with our corporate culture? Would we not be better off increasing profits by offering the best service? What is financially or organizationally feasible? Where are we already making a real impact, and can AI help amplify that impact?

Organizations would do well to first clearly define the specific problems they want to solve – so-called use cases – before considering AI as a potential solution. To get to the core of the matter, it helps to ask some ‘W-questions.’ Why do we want to work more efficiently? Why do we want to save costs? Why is AI the best solution for this? What can go wrong if we do nothing, and who will suffer from that? Identifying the underlying problem in this way during the initial phase of an AI project significantly reduces the risk of disappointment later on.

A convergence of failure factors

Even once it is clear exactly which problem AI is supposed to solve, a project can still fail for all sorts of other reasons. For example, organizations sometimes enthusiastically embrace the technology without asking themselves whether the potential users (employees or customers) actually want it. In 2024, the Swedish fintech company Klarna announced that AI could take over a large part of its customer service. Later, the company acknowledged that the picture is more nuanced: customers still need contact with real people. And while Salesforce CEO and co-founder Marc Benioff proclaimed that his AI agents were the ‘biggest breakthrough in tech,’ potential customers had no idea yet where such agents would actually add value. Technology is capable of a great deal, but deploying it is only helpful if it addresses a need and truly advances your work or business.

Another pitfall is applying AI to large and complex problems for which insufficient data is available. When a situation is entirely new and little information exists, AI can offer only limited support, because the models primarily learn from historical data. Cybersecurity, for example, benefits greatly from AI for pattern recognition, but cannot rely on it blindly, as the threat landscape is constantly changing. Historical information can be valuable, but it also becomes outdated quickly. An AI system based primarily on old data therefore offers no guarantee that the decisions or scenarios it supports will be effective against new attack techniques.

Data availability and data quality are two more reasons why quite a few AI projects fail. Sometimes there is simply not enough (good) data to train an AI model effectively. In that case, a project to collect that data over an extended period must be launched first, which is of course much less exciting than doing ‘something fun’ with AI. The questions to ask up front are therefore: is the data even available, and is its quality good enough?

Organizations always remain responsible themselves for decisions made with the help of AI. You therefore need experienced people to verify the AI output, to ensure that the answers are truly in line with the facts and with the problem to be solved. That internal expertise is not always available. Organizations should in any case invest in AI literacy at various levels, from basic to system-specific knowledge. This is not a one-time training session, but an ongoing process.

It is advisable to make this a board agenda item and to invest in it structurally. Responsible handling of data and AI is a core skill in a world where it is becoming increasingly difficult to distinguish reliable information from noise.

From experimenting to managing

Now that AI projects are underway at virtually all companies, we see a new problem emerging. After a period of enthusiastic pilots and decentralized experiments, there has been a proliferation of small-scale solutions. While these may be useful locally, at the organizational level, the sheer number of individual agents makes it impossible to see the forest for the trees.

This raises new questions related to governance: how do employees navigate the abundance of tools without losing control? Are the data and AI accessible and usable, rather than hidden in systems that only one person understands? Is anyone responsible for the data and for the AI systems running on it? Is sensitive data properly protected, given the potential for misuse or abuse? Does the system make decisions in a way that we consider correct or ethically sound? Is the balance between compliance and results appropriate? For AI projects to be successful in the long term, organizations will need to build a robust governance model from the very beginning.

The GenAI Paradox

The reality is that the business case for many GenAI applications is difficult to justify. For repetitive tasks, GenAI may be worth considering and investing in, but in many situations, building and maintaining a reliable model costs so much money, time, and effort that it will never become profitable. This is also known as the ‘GenAI paradox’: we are investing heavily and expecting miracles, but the tangible net return has yet to materialize. Does this mean we should avoid it? Certainly not. But it does mean that organizations do not have to blindly follow every new AI hype. It is better to closely monitor developments and selectively choose applications that truly add value to your own organization.

However, that does not mean we should not prepare for the future. We do not know the direction developments will take, but successful AI applications are built on a foundation of good data quality, AI-literate employees, clear ethical frameworks, and robust cybersecurity. Organizations that use the AI hype as an incentive to finally address data quality, governance, and AI literacy will emerge stronger, regardless of how the technology evolves.

Beyond the hype

Like any new (technological) development, generative AI will necessarily go through the hype cycle: from the initial breakthrough, through a peak of inflated expectations, to a trough of disillusionment, to eventually stabilize on a plateau where its true positive and negative impacts become clear. It may take us decades to reach that plateau, but we could collectively learn faster by being more transparent and honest about what works and what falls short. Currently, every cost saving is quickly attributed to AI, even when barely substantiated.

In fact, we should do the opposite and share with each other what we have tried, what did not work or what fell short, and how much of our time, energy, and budgets it has consumed. For a CEO, it can be uncomfortable to admit that a costly pilot yielded little or nothing. Yet it is precisely at this stage that it is essential to acknowledge that even a well-designed project can fail, and that sharing knowledge about such failures is crucial for the further development of the technology.

Essay by Lotte Hart, partner at SeederDeBoer (responsible for data and AI), and Nena Roes, senior consultant at SeederDeBoer. Published in Management Scope 04 2026.